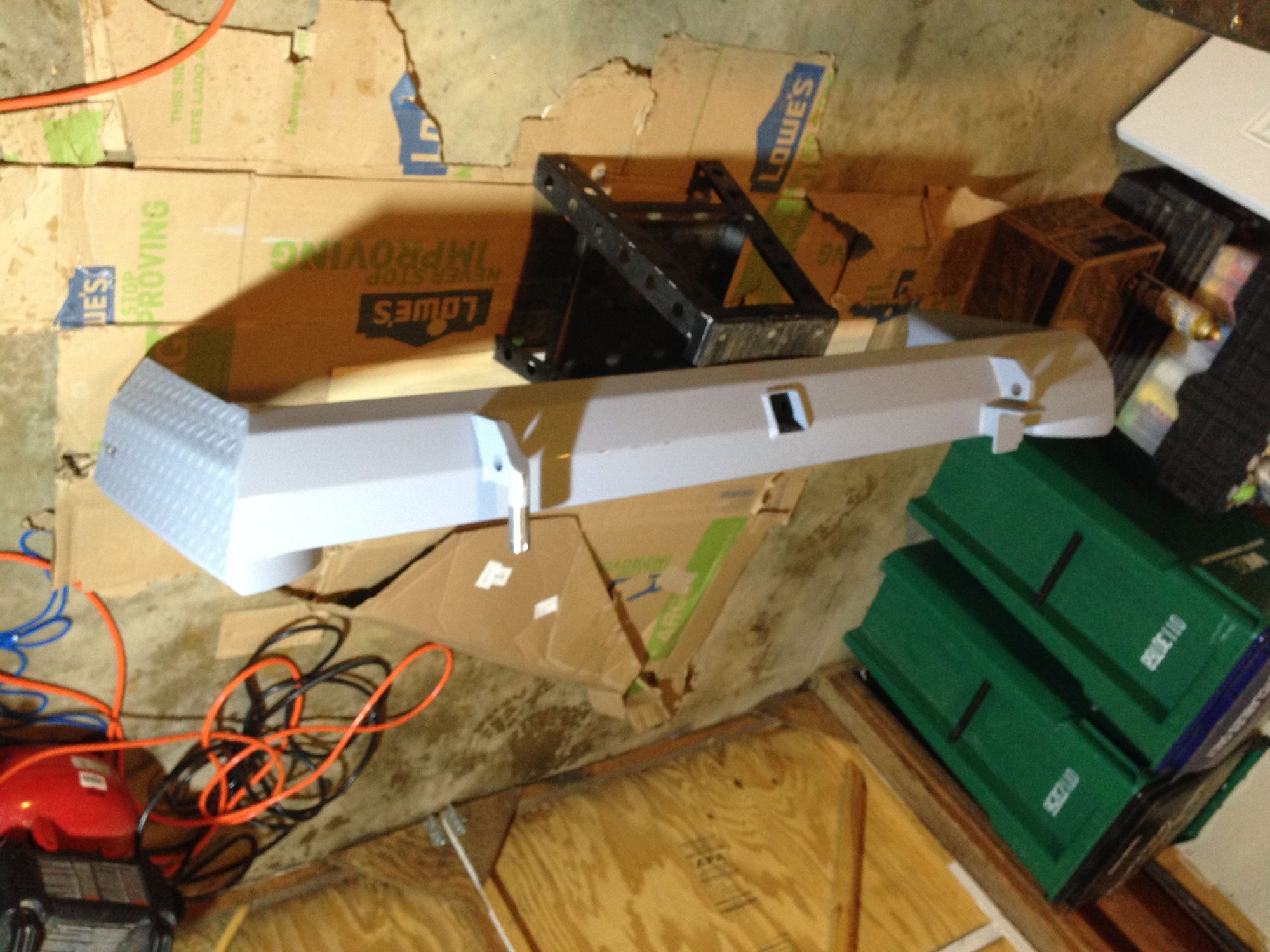

Detours offroad hardware

4/9/2023

To demonstrate how the simulator could be used in real-world scenarios, a highly detailed digital scene was developed based on a unique off-road terrain feature. The feature, shown in Figure 11a, is a large vertical step in an off-road trail with a tree root acting as a natural embankment. The geometry of the root feature was measured by developing a structure mesh from a sequence of 268 digital images. The resulting surface mesh contained over 22 million triangular faces and was approximately 2 m by 3 m by 0.4 m in height. The scene was then augmented with randomly placed models of grass and trees. The resulting scene contained 24,564 grass and tree meshes for a total of 604,488,350 triangular faces. A digital rendering of the synthetic scene is shown in Figure 11b. Additionally, LIDAR simulations of the scene with two of the sensors discussed in this work are shown in Figure 11c,d as a demonstration of the capability of the LIDAR simulation to scan a complex scene in real time. The points in Figure 11c,d are color-coded by intensity, and the root feature can be clearly distinguished near the center of each figure.
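The random placement of grass and tree models could be sketched roughly as follows. This is only an illustration under stated assumptions: the text does not say how placements were sampled, so the uniform sampling, the random yaw, the scene extents, and the names `Placement` and `scatter_vegetation` are all hypothetical.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Placement:
    model: str   # name of the vegetation model to instance (hypothetical labels)
    x: float     # position in metres within the scene footprint
    y: float
    yaw: float   # rotation about the vertical axis, in radians


def scatter_vegetation(count, extent_x_m, extent_y_m,
                       models=("grass", "tree"), seed=0):
    """Place `count` model instances uniformly at random in a rectangle.

    Assumption: uniform sampling with a random yaw. The source only states
    that the models were "randomly placed".
    """
    rng = random.Random(seed)  # seeded so the same scene can be regenerated
    placements = []
    for _ in range(count):
        placements.append(Placement(
            model=rng.choice(models),
            x=rng.uniform(0.0, extent_x_m),
            y=rng.uniform(0.0, extent_y_m),
            yaw=rng.uniform(0.0, 2.0 * math.pi),
        ))
    return placements


# 24,564 is the mesh count quoted in the text; the 50 m extents are placeholders.
scene = scatter_vegetation(24_564, extent_x_m=50.0, extent_y_m=50.0)
print(len(scene), scene[0])
```

Seeding the generator keeps the synthetic scene reproducible, which matters when the same scene is scanned with more than one simulated sensor.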

In this work, we outline the development of a real-time, physics-based LIDAR simulator for densely vegetated environments that can be used in the development of LIDAR processing algorithms for off-road autonomous navigation. We present a multi-step qualitative validation of the simulator, which includes the development of an improved statistical model for the range distribution of LIDAR returns in grass. As a demonstration of the simulator’s capability, we show an example of the simulator being used to evaluate autonomous navigation through vegetation. The results demonstrate the potential for using the simulation in the development and testing of algorithms for autonomous off-road navigation.

The requirements for validation of physics-based simulation can vary in rigor depending on the application. Accuracy requirements must be defined by the user, and this creates well-known difficulties when generating synthetic sensor data for autonomous vehicle simulations. In the sections above, the development of a generalized LIDAR simulation that captures the interaction between LIDAR and vegetation was presented. The simulation is not optimized for a particular model of LIDAR sensor or type of environment. Therefore, to validate the simulation, it is of primary importance to qualitatively reproduce well-known LIDAR-vegetation interaction phenomena. To this end, three validation cases are presented. In the first case, the results of the simulation are compared to an analytical model. Next, simulation results are compared to previously published laboratory experiments. Finally, the simulation is compared to previously published controlled field experiments. All three cases show good qualitative agreement of the simulation with the expected results.

In our simulated experiment, the sensor was placed at a distance of 8 m from the shrub shown in Figure 8a, and points were extracted from one rotation of the sensor on the 5 Hz setting. The sensor was moved along a quarter-circle arc at a distance of 8 m in 1 degree increments, for a total of 91 measurement locations. All distance measurements that returned from the shrub were binned into a histogram, which is shown in Figure 8b. In the original experiments, two main features of the range distribution were observed: first, the broadening of the distribution due to the extended nature of the object, and second, the bimodal nature of the distribution due to some returns coming from the trunk and others from the foliage. Comparing our Figure 8b to Figure 4 of the original study, it is clear that the simulation reproduces both of these features of the distance distribution. This qualitative agreement indicates that our simulation is valid for Velodyne sensors interacting with vegetation.
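The geometry of the simulated shrub experiment (a quarter-circle arc at 8 m, sampled in 1 degree steps, with returned ranges binned into a histogram) can be sketched as below. This is a minimal sketch, not the simulator's code: the function names, the 0.5 m bin width, and the sample range values are assumptions made purely for illustration.

```python
import math
from collections import Counter


def arc_positions(radius_m=8.0, start_deg=0, stop_deg=90, step_deg=1):
    """Sensor (x, y) positions on an arc centred on the target at the origin."""
    positions = []
    for deg in range(start_deg, stop_deg + 1, step_deg):
        theta = math.radians(deg)
        positions.append((radius_m * math.cos(theta),
                          radius_m * math.sin(theta)))
    return positions


def range_histogram(ranges_m, bin_width_m=0.5):
    """Count returns per range bin; keys are each bin's lower edge in metres."""
    counts = Counter(math.floor(r / bin_width_m) for r in ranges_m)
    return {index * bin_width_m: n for index, n in sorted(counts.items())}


positions = arc_positions()
print(len(positions))  # 0..90 degrees inclusive gives 91 measurement locations

# Placeholder ranges standing in for simulated returns from the shrub.
example_ranges = [7.2, 7.3, 7.4, 8.1, 8.2]
print(range_histogram(example_ranges))
```

In a real run, the ranges from all 91 positions would be pooled before binning, so that the broadening and the two modes of the distribution show up in a single histogram.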

Machine learning techniques have accelerated the development of autonomous navigation algorithms in recent years, especially algorithms for on-road autonomous navigation. However, off-road navigation in unstructured environments continues to challenge autonomous ground vehicles. Many off-road navigation systems rely on LIDAR to sense and classify the environment, but LIDAR sensors often fail to distinguish navigable vegetation from non-navigable solid obstacles. While other areas of autonomy have benefited from the use of simulation, there has not been a real-time LIDAR simulator that accounted for LIDAR–vegetation interaction.
